The 6 core elements of big data

With the rise of smart devices, the internet has become a breeding ground for data. Many websites and apps track user activity so that they can improve the user experience. This new breed of digital media and applications has given rise to the term big data. With more and more information being created, stored, scanned, and analyzed, big data is here to stay. However, not everyone understands how it works or why it is necessary. Here is a closer look at the core elements of big data.

What is big data?

Big data is data that is too large or complex to be processed using traditional computing methods. This includes everything from social media posts to industrial data sets. Major characteristics of big data include its high volume and diversity. Sources of big data include sensors in physical environments, such as air quality monitors; online social media activity, such as conversation dynamics; and massive databases, such as medical records.

There are many benefits of working with big data. For example, with enough data collected over time, predictive analytics can produce forecasts that would be difficult or impossible with smaller data sets. This approach has been used in fields such as finance and insurance, where predictions about customer behavior are important for making informed decisions about marketing campaigns or product offerings. Additionally, businesses can use big data to improve their agility by reducing the time needed to conduct data analysis when their environment changes, such as when new products are launched.

However, there are also challenges associated with working with big data that must be taken into consideration when planning a strategy for using this technology in your business. These include storage capacity issues; privacy concerns; management and processing challenges; and latency issues caused by huge amounts of traffic on big data infrastructure resources.

Big data is a powerful tool that can be used to gain valuable insights into customer behavior, market trends and more. To make the most of big data, however, it is important to understand the six Vs of big data: velocity, variability, volume, variety, veracity, and value.

The value of big data and the importance of a big data strategy

At its core, big data is simply large collections of data that can be used to glean more accurate insights into customer behavior. This data can be used to develop more effective business strategies, monitor your competition, and identify new opportunities and trends in the market. In fact, many companies are finding that big data is not only valuable on its own, but it also helps to improve customer service and increase customer satisfaction.

With so much data available at our fingertips, it has become easier than ever to track customer interactions across multiple channels and devices. This information can then be used to develop more effective marketing strategies and target audience segmentation. Additionally, by monitoring waste levels in your business, you can identify areas where you are wasting money or resources. This can result in significant cost savings for your organization in the long run.

Another great benefit of using big data is that it can help businesses better monitor their competition. By understanding what customers in their market are buying and how they’re using products or services, businesses can stay one step ahead of the competition. In addition, by identifying new opportunities early, businesses can capitalize on these trends before anyone else does.

Managing data volume

Data volume is a key factor when it comes to understanding big data. Volume refers to the amount of data that an organization collects and stores. With big data, businesses can collect and store an unprecedented amount of data in a short amount of time. This has many benefits for businesses, including the ability to make better decisions quickly and improve business processes.

One way that businesses can use big data is by identifying sources of data that they didn’t previously have access to. By understanding the volume and type of data that is being collected, companies can then begin scaling their operations accordingly. In addition, by understanding where all this new data is coming from and how it is being used, companies can optimize their business processes and workflow.

Another important use for big data volume is predictive analytics. Predictive analytics uses historical information to make predictions about future events or situations. This type of analysis can be used for a variety of purposes, such as marketing planning or product development. By using large amounts of historical information, predictive analytics can generate accurate results quickly, which is critical for businesses operating in a fast-paced world.
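To make the idea of predicting from historical information concrete, here is a minimal sketch of a trend forecast. It fits a straight line to past values using ordinary least squares and extends it one step ahead. The sales figures and the `fit_line` helper are made up for illustration; real predictive analytics typically uses far more data and richer models.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance of x and y, and variance of x (both unnormalized).
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical monthly sales history (illustrative numbers only).
months = [1, 2, 3, 4, 5]
sales = [100, 110, 120, 130, 140]

slope, intercept = fit_line(months, sales)

# Extend the fitted trend to the next month.
forecast = slope * 6 + intercept
print(f"Forecast for month 6: {forecast:.1f}")
```

With only five points the forecast is just an extrapolation of the trend. The larger and more representative the history, the more such a model has to work with, which is where data volume comes in.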

Machine learning and natural language processing are two more important technologies that rely on big data volume for operational efficiency and accuracy. Machine learning algorithms learn from large amounts of training data without having to be explicitly programmed, making them ideal for tasks such as analyzing huge text or image data sets. Natural language processing algorithms help us understand human communication in terms of syntax and meaning. Together, these technologies allow us to process large amounts of textual or linguistic information more accurately than ever.

The variety in big data analysis

There’s no single right way to analyze data, which is why it’s important to have a diverse range of data types. One of the most common types of data in big data is text data. This type of data can be analyzed using text analytics techniques such as sentiment analysis or keyword analysis. By understanding how people respond to different pieces of content, you can generate insights that help you optimize your content strategy.
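As a rough illustration of keyword analysis, the sketch below counts the most frequent meaningful words across a handful of customer reviews. The reviews, the short stop-word list, and the `top_keywords` helper are all invented for this example; production text analytics would use a fuller stop-word list and proper tokenization.

```python
import re
from collections import Counter

# Hypothetical, deliberately short stop-word list for illustration.
STOP_WORDS = {"the", "a", "is", "and", "but", "it", "was", "to", "of"}


def top_keywords(texts, n=3):
    """Return the n most frequent non-stop-words across a list of texts."""
    counts = Counter()
    for text in texts:
        # Lowercase the text and pull out runs of letters and apostrophes.
        words = re.findall(r"[a-z']+", text.lower())
        counts.update(w for w in words if w not in STOP_WORDS)
    return counts.most_common(n)


# Made-up customer reviews.
reviews = [
    "The delivery was fast and the packaging was great",
    "Fast delivery but the product is average",
    "Great product, fast shipping",
]

print(top_keywords(reviews, 4))
```

Even this simple count suggests what customers talk about most, which is the kind of signal that can feed into a content strategy.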

Another common type of data in big data is numerical data. This type of information can be analyzed using traditional statistical methods. By understanding how different variables impact each other, you can create models that accurately predict future outcomes.

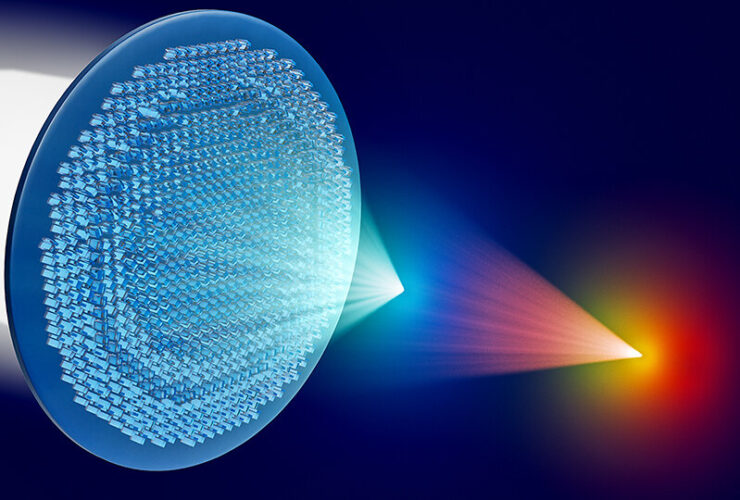

Image and video data is another important type of data. This type of information can be analyzed using machine learning algorithms to identify patterns or trends that aren’t apparent when looking at text or numerical information alone.

By having a diverse set of datasets at your disposal, you can derive a wealth of meaningful insights from your big data analysis. By combining different types of data into custom models, you can often make more accurate predictions about future events or behavior than any single data type would allow.

Variability: analyzing the patterns

When you’re working with big data, there’s a lot of variability – both in the data itself and in how it is being used. This variability can be tricky to understand, and it can be difficult to predict or control. However, by understanding the multi-dimensional variability in big data, you can make better decisions and predictions.

One key way to understand multi-dimensional variability is to break a dataset down into its dimensions. For example, a dataset might have dimensions such as time, place, people or things. By looking at each dimension, you can get a better understanding of how the data varies and how those variations relate to other variables. This information can help you make more accurate predictions about future events or trends.

Another important way to understand variability is by viewing it in terms of patterns and trends. By examining specific datasets in more detail, you can identify patterns or trends that recur across them. This information can help you make informed decisions about your data, such as deciding which datasets should be combined for further analysis and which can be discarded altogether.

Incorporating AI into your analysis can help you make sense of complex, highly variable datasets. AI has the power to learn from experience and adapt its approach accordingly, which makes it well suited to analyzing large amounts of data. By using this technology alongside traditional statistical methods, you’ll be able to get a deeper understanding of your data than ever before. By studying for an online DBA in Business Intelligence from Marymount University, you will gain the tools needed for an exciting career.

Velocity in big data

Velocity is simply the speed at which data is moved, shared and transformed. It also refers to the speed at which data can be processed and analyzed. Velocity matters in a number of big data activities, including moving data from one location to another, sharing data between teams or organizations, and transforming data into different formats.

The advantages of high velocity in big data include faster decision making and implementation times. This is because quick access to large volumes of data allows insights to be gleaned sooner. Additionally, fast data sharing can help to avoid siloed thinking (where different teams within an organization work independently), which can lead to inefficient decision making and slower innovation processes.

Examples of real-world use cases for velocity include accelerating product development cycles by moving consumer insights into product design quickly; streamlining market research by quickly collecting massive amounts of customer feedback; reducing time-to-market for new products by rapidly incorporating user feedback into prototypes; and speeding up ad optimization processes by processing large amounts of video content at a rapid pace.

Velocity is a key component of big data that allows for faster decision-making and implementation times. By leveraging technologies and following best practices, businesses and organizations can take advantage of velocity to increase their efficiency and effectiveness in data processing and data movement.

Veracity: data accuracy and integrity in big data

Data accuracy is imperative in big data. If the data that is being collected is inaccurate or fraudulent, it can have serious consequences for businesses. Not all data is accurate, even if it comes from trusted sources. To make sure that data is accurate and reliable, it must undergo several steps to verify its trustworthiness.

Data that is being collected needs to be relevant to a business and not compiled just because it exists. To verify the accuracy of the data, it should be compared against other sources of information. This includes both structured and unstructured data sources.
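Comparing data against another source can be sketched in a few lines. The example below checks customer records from one system against a second system and reports which fields disagree. The record layout, the two sources (`crm` and `billing`), and the `find_mismatches` helper are hypothetical and exist only to illustrate the idea.

```python
def find_mismatches(primary, reference, fields):
    """Compare records that share a key.

    Returns a dict mapping each problematic key to the list of fields
    that disagree, or to a note if the key is absent from the reference.
    """
    mismatches = {}
    for key, record in primary.items():
        other = reference.get(key)
        if other is None:
            mismatches[key] = ["missing in reference"]
            continue
        bad = [f for f in fields if record.get(f) != other.get(f)]
        if bad:
            mismatches[key] = bad
    return mismatches


# Two made-up sources holding the same customers.
crm = {
    "c1": {"email": "ana@example.com", "city": "Lisbon"},
    "c2": {"email": "bo@example.com", "city": "Oslo"},
    "c3": {"email": "cy@example.com", "city": "Cairo"},
}
billing = {
    "c1": {"email": "ana@example.com", "city": "Lisbon"},
    "c2": {"email": "bo@example.org", "city": "Oslo"},
}

issues = find_mismatches(crm, billing, ["email", "city"])
print(issues)
```

A mismatch does not say which source is wrong, only that the record needs a closer look before it is trusted.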

It is also important to keep track of how the data is changing to ensure its accuracy and integrity over time. This will require regular updates and monitoring, but it is important for ensuring the trustworthiness of big data collection efforts.

There are several challenges involved in verifying the veracity of big data sets. These include differences between controlled and uncontrolled operational environments, assessing reliability across large-scale datasets, and detecting fraudulent activity in a timely manner. However, by taking these challenges into account when collecting and storing big data, businesses can better maintain accuracy and integrity in the long term.

The future of big data analytics

As businesses continue to grow, they need to collect more and more data. This data is then used to optimize processes, gain insights and make better decisions. However, the growth of big data isn’t just continuing; it is accelerating. As data volumes keep increasing, big data analytics is being deployed more widely across businesses to help them get the most out of their data.

With cloud computing, businesses can access their data from anywhere in the world, no matter how large or small the dataset is. This makes it easy for businesses to gain insights from their data regardless of its location. In addition, machine learning algorithms are becoming increasingly advanced, helping businesses make better decisions quickly and easily.

Predictive analytics enables businesses to anticipate customer needs and preferences before they arise. This allows businesses to be proactive in meeting customer demands before they become an issue. Additionally, big data is being used for automated decision making, which helps companies make better choices faster than ever before with little or no human input.

Overall, using big data will enable businesses to anticipate customer needs and preferences better than ever before and gain a competitive edge in the market through better insights and analysis of customer behavior. Big data is an invaluable tool that can help businesses remain competitive in the modern marketplace.

With ever-growing volumes of data, big data analytics and predictive analytics are becoming increasingly common. Automated decision-making tools enable businesses to make good choices quickly. Ultimately, big data will continue to be a key part of business operations in the future, and those who invest in it now will reap the rewards for years to come.